The 2025 Microsoft New Future of Work Report

Episode 17 explores an all-AI Future of Work report. Maybe they’re behind target on Copilot subscriptions?

For the past four years, Microsoft’s annual Future of Work Report has been our go-to resource for figuring out what’s changing about how people work. Coming out of the pandemic, a lot shifted: we returned to the office, but we brought with us all the technology and workflow changes that remote work had produced. So when the 2025 report came out, it was a no-brainer for us to add it to the podcast schedule.

Microsoft’s massive scale and distributed customer base give it data that nobody else has. And these reports have been both timely and well-executed, providing the most up-to-date information about work trends we’ve seen.

Unfortunately, every single slide in the report is about AI. To be fair, AI came a long way in 2025 (and looks poised to go even further in 2026), but we were hoping to talk about new trends in hybrid work, or how chat and remote meetings have continued to evolve. Still, there’s enough in this report to be interesting, and we’ll supplement it with some non-AI findings from our own product usage data that might surprise you!

The Good News: AI Can Make Us Better

The most optimistic finding in the report comes from a Harvard Business School study conducted at Procter & Gamble. Researchers put about 700 people through a product development brainstorming exercise and tested four different configurations: individuals working alone, individuals working with AI, two-person pairs (one from commercial, one from R&D), and two-person pairs working with AI. Judges evaluated all of the ideas using measures of novelty, practicality, and potential business impact.

The two-person teams with AI assistance came up with the best ideas, by a solid margin. So AI helped make people better, but as an extra teammate, not a replacement for either of the humans.

This suggests AI’s biggest value is still in augmenting human teams. That fits the familiar story of new productivity-enhancing tools joining our toolkit, the same story that has taken us from subsistence farming to modern life. Past technological shifts have been disruptive, but we’ve gone through them before and come out wealthier, with better lives, on the other side.

For those of us worried about AI replacing workers wholesale, this is reassuring.

But AI Is Already Better Alone, Sometimes

That said, the report includes some research that makes me feel both anxious and excited at the same time.

One of the most credible-seeming studies referenced in the report looked at doctors making diagnoses across a variety of specialties and evaluated how frequently the diagnoses were correct. When doctors worked alone, they got the right answer 75% of the time. When they used AI optimally (making their own diagnosis first, then using AI to challenge their assumptions rather than just provide answers), accuracy jumped to 84%. That’s great news for AI-assisted medicine, and that was the lead message in the report.

But digging past the headline into the data reveals something even more interesting: AI alone got the right diagnosis 90% of the time.

So if you’re a patient, this is (probably) very good news! AI medicine improved diagnostic accuracy from 75% to 90%, which cuts the error rate from 25% to 10%. That means you’re less than half as likely to be treated for the wrong thing, an unequivocally better outcome.

But if a core competency of doctors, one of the hardest professions humans have, can be done better by computers, what else will be left? We’re certainly not at the point where human doctors are obsolete, but something that seemed impossible even a year ago suddenly seems… maybe on the horizon? It’s a stark reminder that AI capabilities are advancing fast.

Consider the Source

Before we take all these findings as gospel, it’s worth noting where the data comes from. A significant majority of the AI-favorable statistics in the report are sourced from OpenAI, Anthropic, or Microsoft’s own Copilot research. And all of these companies have a lot of reasons to want you to believe that you need to buy AI tools.

What I didn’t see anywhere in the report were the studies showing that AI can cost people extra time, and those exist. In a previous episode, we talked about studies where the overhead of reviewing AI output, checking for hallucinations, and fixing subtle errors sometimes made tasks take 15-20% longer than just doing them outright.

Though the headline from Microsoft’s report was basically “AI saves everyone time on almost everything,” the reality is not so clear. So I’m going to take the optimistic findings seriously, but with a healthy dose of skepticism.

A Non-AI Trend: More Meetings than Ever

In past years, Microsoft’s team summarized research on how people work, which tools they use, and how they spend their time. This year, we didn’t get any of that, so we’re going to take a quick break from the Future of Work report to discuss some findings from a study we ran using data from our own Calendar Insights tool in Boomerang.

During the pandemic, it felt like I lived in Zoom. Every time I wanted to talk with a colleague in real time, I had to put something on the calendar so we could join a virtual meeting. Admittedly, lots of work that might have been done in meetings or work sessions got done over Slack or email instead, but when even game nights with friends were virtual, it certainly felt like we had more meetings.

But the data showed that the pandemic actually triggered a decline in the number of meetings on our calendars. Turns out, a lot of those meetings could have been an email! And when it was time to mask up, they were.

But like many pandemic trends, this one’s reversed. We’ve since added all those meetings back, plus more. There were more meetings on average in 2025 than there have ever been before.

Like entropy in the universe, meetings only ever go up.

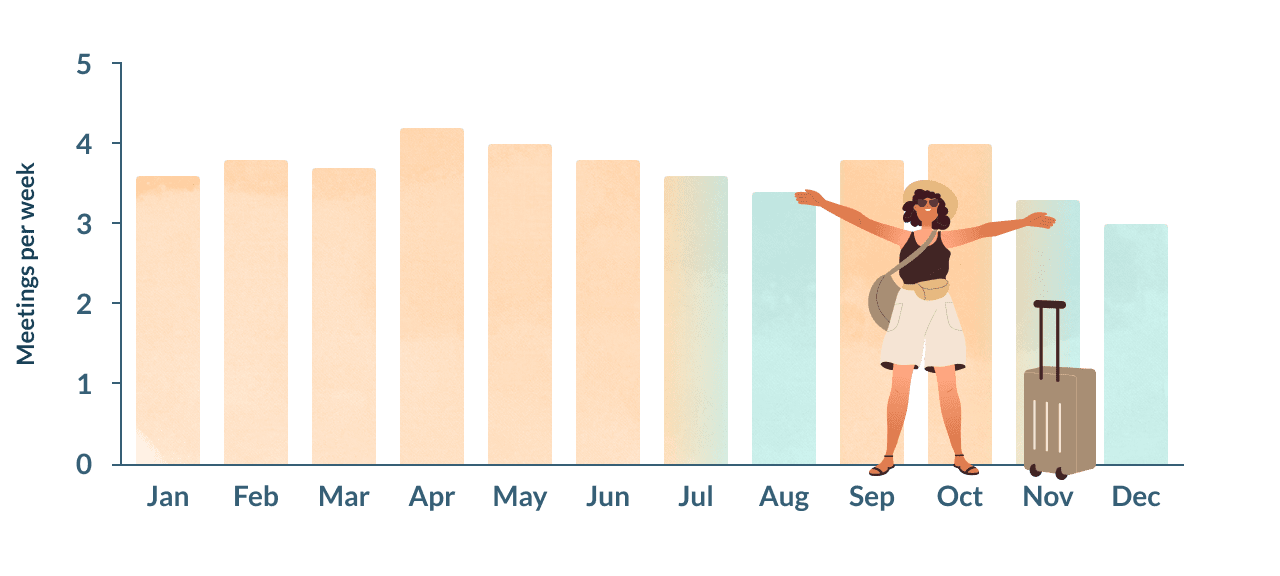

We also looked at trends for when people schedule the most meetings. Conventional wisdom holds that the summer tends to be a dead time, with kids out of school and vacation on everyone’s mind.

The data shows that, at least as far as meeting habits go, “summer” really means “August.” June was actually a busier meeting month than average, with July in the middle. December, as you might guess, had the fewest meetings of all due to the holidays.

Opportunities Are Knocking

The report did a nice job of identifying a few areas that are going to need new workflows or new tools to help us work more effectively with AI.

The first opportunity is multiplayer AI. Right now, most AI tools are designed for individuals. You have your ChatGPT session, your Claude Code instance, your Gemini app. These are personal. They don’t know what your teammates are working on, and they can’t participate in group dynamics. And while you can share chat histories with someone on your team, there’s not a good way to collaborate on prompts or provide extra context in either direction.

The report points toward a future where that changes. Imagine AI that participates in meetings thoughtfully, knowing when to interject and when to stay quiet. That AI could know when to be a devil’s advocate, when to mediate a disagreement, or when there’s something going on in another team that would have a meaningful impact on the discussion. That AI could also handle meeting handoff, summarizing what finance needs to know from what was discussed in the marketing meeting without anyone having to write it up. The research suggests people are surprisingly open to AI playing these roles, as long as it does so respectfully.

Another major opportunity is making tools and workflows for reviewing work at scale. For most of human history, creating things was harder than reviewing them. It took longer to write a report than to read and critique one.

With AI, that’s flipped. AI content creation is fast. You can generate a grammatically correct 10-page document in seconds. This creates a new problem, which the report calls “work slop.” Even content that is pure garbage now looks professional, so our shortcuts for identifying high-quality work no longer serve us. We’re going to have to figure out how to work in a world where the bottleneck is now identifying quality and reviewing details.

For example, in software, there are literally thousands of code editors, likely tens of thousands. But good code review tools are few and far between. The industry has invested heavily in making production easier, but the tools for reviewing code output are still primitive. Now that AI is writing a lot of the code, the value of a great code editor has diminished a lot, and the value of a great code review tool is much higher. Hopefully we’ll see a lot more effort put into tools for code review as we move forward!

Tips of the Week

Though a lot of the report was theoretical rather than practical, there were a couple of useful takeaways for interacting with AI that I haven’t seen before.

One useful finding: the practice of “grounding,” or giving extra context to AI in your prompt, improves AI outputs.

AI chatbots are designed to give you a lot with little effort. Type a short question, get a comprehensive answer. That’s the interaction style that creates the most engagement. But it’s not the style that creates the best output for you. The more context you give the AI (what you’re trying to accomplish, what constraints you’re working with, what you’ve already tried, what information you have), the better your results will be.

If you’re not sure what’s relevant, ask the AI to ask you questions. “I want to write a proposal for X. What questions would you need answered to help me write it well?” Let it interview you first, then ask for the actual proposal. AIs don’t behave like this by default, because it increases friction and reduces usage. But when the output quality matters, it’s a good technique to keep in your back pocket.

The report also talks about how to engage with AI while still building and maintaining your skills. Again, it’s not as easy as typing in a short question and getting a comprehensive answer; you have to use the AI to challenge your own thinking rather than outsourcing the thinking to it.

But “deskilling” is a real risk for anyone incorporating AI into their workflow. One of the studies cited in the report showed that doctors who used AI assistance, when later tested without it, performed worse than before they started using AI. Their diagnostic skills had atrophied from disuse. Doctors who used prompts like “Here’s my approach. What are the weaknesses in this thinking?” did not see the same effect.

The bonus is that this approach also produces better outputs. AI is trained to be agreeable. Ask “Is this good?” and it will tell you that you’re brilliant. Ask “What’s wrong with this?” and you’ll get genuinely useful feedback.

Whew! That’s hopefully it for AI for a little while. We hope y’all are keeping your New Year’s resolutions into February.

To hear the full discussion, listen to Episode 17 of Less Busy Lab. Have questions? Send them to questions@lessbusylab.com.